This article is the first in a series exploring how Agile thinking and AI intersect in practice—not as competing ideas, but as forces that now operate together inside the same systems of work. Much of the current conversation frames AI as a replacement: for roles, for practices, or even for Agile itself. My experience—both with clients and in my own work—suggests something more nuanced, and far more useful, is happening.

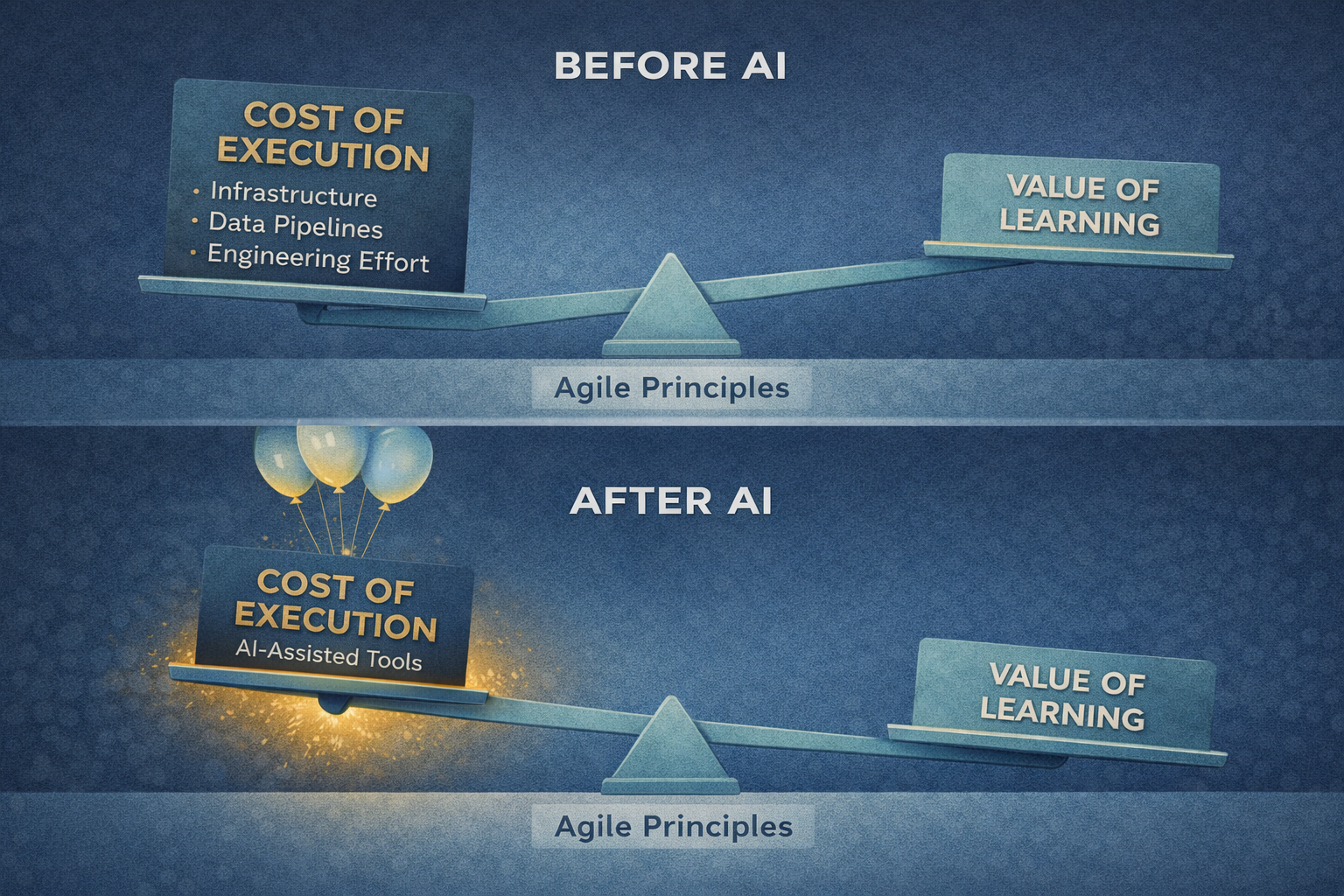

AI is fundamentally changing the economics of execution. And that shift is putting renewed emphasis on the Agile principles that were always meant to guide decision-making under uncertainty.

I want to start this series with a concrete example.

A Real Decision, Not a Thought Experiment

I recently finished a multi-year project building a new house in New England. The house was designed and built to Passive House standards, emphasizing airtightness, insulation, and controlled ventilation to dramatically reduce energy demand. While average temperatures here have been warming over time, winters can still include extended cold spells and the occasional very cold day.

The house is heated entirely with air-source heat pumps. For readers less familiar with them, a heat pump doesn’t create heat the way a furnace does. During the heating season, it extracts heat from the outside air and moves it indoors; during the cooling season, it runs in reverse, moving heat from inside the house to the outside. In our case, four air-source heat pumps serve different zones of the house.

During the design phase, I had an ongoing discussion with the HVAC engineer about how the system would perform in cold conditions. His position was straightforward: modern cold-climate heat pumps can keep up even as outdoor temperatures drop. I didn’t disagree—but that wasn’t the question I was trying to answer.

My concern was economic, not functional.

Heat pump efficiency is typically expressed as a coefficient of performance (COP), which measures how many units of heat are delivered for each unit of electricity consumed. At mild outdoor temperatures (around 50–60°F), a modern cold-climate heat pump might deliver three to four units of heat for every unit of electricity (a COP of roughly 3:1 to 4:1). As outdoor temperatures fall, that ratio declines. Around freezing, COPs often drop closer to 2:1, and at very cold temperatures, near 0°F or below, even high-performance “hyper-heat” systems can approach 1:1.

At that point, the system is still doing its job. The house stays warm.

But the economics change dramatically.

That distinction—capability versus cost—was the real question I wanted to answer.
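To put rough numbers on that distinction, here is a minimal sketch in Python. The electricity price of $0.30 per kWh is an assumed figure for illustration, not my actual rate, and the COP values are simply the approximate ones quoted above (3.5 stands in for the 3:1 to 4:1 range).

```python
# Assumed electricity price in dollars per kWh (illustrative only).
ELECTRICITY_PRICE = 0.30


def cost_per_kwh_of_heat(cop: float, price: float = ELECTRICITY_PRICE) -> float:
    """Electricity cost to deliver one kWh of heat at a given COP."""
    if cop <= 0:
        raise ValueError("COP must be positive")
    return price / cop


# Approximate COPs from the text, keyed by outdoor condition.
scenarios = {"mild (50-60F)": 3.5, "around freezing": 2.0, "near 0F": 1.0}

for label, cop in scenarios.items():
    print(f"{label}: ${cost_per_kwh_of_heat(cop):.3f} per kWh of heat")
```

Whatever the actual price, the ratio is what matters: under these assumed COPs, a unit of heat delivered near 0°F costs about three and a half times what it costs on a mild day.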

Preserving Optionality

We had deliberately preserved an option. Outside the house, we left space and connections for a future exterior wood-fired boiler. That system would heat water and circulate it through hydronic lines, providing radiant floor heat in the basement and supplemental heating through coils in the air handlers. Electricity would be used primarily to run circulation pumps; the energy-intensive work of generating heat would come from wood.

Adding such a system would be expensive and would fundamentally change how the house operates in winter. I didn’t want to make that decision based on intuition, rules of thumb, or vendor claims. I wanted to understand, over a full heating season, whether there was a clear economic case for switching energy sources during the coldest periods of the year.

That question is what sent me down the path of collecting data.

From Intent to Insight: The Data Problem

I’m inherently a data-oriented decision-maker, and the house reflects that. It contains close to seventy independent control systems—covering energy monitoring, HVAC, lighting, ventilation, water management, solar, batteries, security, networking, and more.

Some of those systems store data in the cloud and expose it through consumer-facing applications. Others provide APIs that allow raw data to be extracted programmatically. Still others generate data that is technically accessible but poorly structured, inconsistently labeled, or difficult to correlate with anything else.

Individually, each system worked. Collectively, the data was fragmented.

To answer even a basic question—such as how energy consumption varied with outdoor temperature over time—I needed to combine weather data, circuit-level power usage, heat-pump runtime, indoor temperatures, and operating modes. None of that data lived in one place, and much of it wasn’t immediately usable.
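As a rough illustration of what "combining" means here, the sketch below joins two sources on a shared hourly key and averages energy use per outdoor-temperature bin. The function name, the dictionary shapes, and the sample values are all hypothetical; this is not the code behind my dashboards, and real sources would first need their timestamps normalized to a common key.

```python
from collections import defaultdict


def energy_by_temperature_bin(temps, energy, bin_width=10):
    """Average hourly kWh per outdoor-temperature bin.

    temps:  dict mapping an hour key to outdoor temperature in deg F
    energy: dict mapping the same kind of hour key to kWh used in that hour
    """
    totals = defaultdict(lambda: [0.0, 0])  # bin start -> [kWh sum, hour count]
    # Only hours present in both sources can be correlated.
    for hour in temps.keys() & energy.keys():
        bin_start = int(temps[hour] // bin_width) * bin_width
        totals[bin_start][0] += energy[hour]
        totals[bin_start][1] += 1
    return {b: kwh / n for b, (kwh, n) in sorted(totals.items())}


# Made-up sample data, purely to show the shape of the result.
temps = {"h1": 34.0, "h2": 31.0, "h3": 12.0, "h4": -5.0}
energy = {"h1": 1.0, "h2": 1.4, "h3": 2.5, "h4": 4.0, "h5": 0.9}
print(energy_by_temperature_bin(temps, energy))
```

Hours that appear in only one source (like "h5" in the sample) are dropped rather than guessed at, which keeps gaps in any one system from distorting the averages.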

The problem wasn’t a lack of information. It was the cost of turning information into insight.

Until recently, building even a basic version of this analytic system would have required weeks to months of my own effort, or hiring a team to do it. Data ingestion pipelines, storage layers, authentication, visualization frameworks, deployment infrastructure: all of that work would have been necessary just to begin answering the question.

None of it would have improved the quality of my thinking.

It was pure execution overhead.

This is where many good ideas stall—not because the intent is unclear, but because the cost of execution outweighs the value of learning.

When the Cost of Execution Collapsed

What changed wasn’t my question, my intent, or the complexity of the required analytical system.

What changed was the economics of execution.

To make this concrete, below is a screenshot of one of the dashboards I created. It shows one heat pump serving the main floor of the house over a 24-hour period, on a day when the outside temperature dropped to -5°F.

This single view brings together outdoor temperature, indoor temperature and heat pump setpoint, real-time power draw, inferred operating states (light heat, normal heat, high heat, defrost), and aggregate energy usage over time.

Using AI-assisted coding tools (principally Claude, with some ChatGPT), I was able—in less than a day—to build dashboards like this, connecting to multiple systems through their APIs, pulling and normalizing data, correlating it across sources, and making it visible in ways that supported real decision-making. I could iterate quickly: refine calculations, add data sources, adjust assumptions, and test alternative views almost immediately.

This level of visibility allowed me to see, at a glance, how often the system was running in different heating modes, when defrost cycles were occurring, how power consumption spiked as outdoor temperatures dropped, and how all of that accumulated into real energy usage.
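One simple way to infer operating states from power draw alone is to bucket readings by wattage, as in the sketch below. The thresholds and names are hypothetical placeholders, not the values or logic my dashboards use, and defrost is deliberately left out because detecting it reliably would likely need more than a power reading.

```python
# Hypothetical upper-bound wattage thresholds; real values depend on the equipment.
STATE_THRESHOLDS = [(150, "idle"), (800, "light heat"), (2000, "normal heat")]


def classify(watts: float) -> str:
    """Map a power reading to an operating state using upper-bound thresholds."""
    for upper, state in STATE_THRESHOLDS:
        if watts < upper:
            return state
    return "high heat"


def summarize(samples, interval_minutes=1.0):
    """Return (minutes per state, total kWh) for evenly spaced watt readings."""
    minutes = {}
    total_kwh = 0.0
    for watts in samples:
        state = classify(watts)
        minutes[state] = minutes.get(state, 0.0) + interval_minutes
        # Energy for one sample: kW multiplied by the interval in hours.
        total_kwh += watts / 1000.0 * interval_minutes / 60.0
    return minutes, total_kwh
```

Calling `summarize(readings, interval_minutes=1.0)` on a day of one-minute readings would give time spent in each state and total energy used, the two quantities the dashboard view accumulates.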

This is what I needed to answer the original question responsibly.

Not can the heat pumps keep up—they can—but what does it cost when they do?

With real data in hand, I could reason about thresholds, not guess at them.

And honestly, doing this was simply fun. There was genuine pleasure in being able to articulate a problem, move quickly to collecting the right data, and start evaluating it without weeks or months of setup work.

The distance between curiosity and insight collapsed.

What had once felt like a heavy, infrastructure-laden task became a lightweight, exploratory process. That sense of momentum—the ability to learn at the speed of thought—is easy to underestimate, but it matters.

The experience wasn’t about delegating thinking to a machine. It was about removing the friction between a clear question and a defensible answer.

A year earlier, I would have faced a trade-off: spend months building modern software infrastructure or outsource the work at significant cost. In either case, the effort required might easily have outweighed the value of the insight I was seeking.

AI fundamentally changed that equation.

Agile Principles in a Changed Environment

What stood out most in this experience wasn’t the sophistication of the tools. It was how familiar the underlying dynamics felt.

I had a real decision to make under uncertainty. I preserved optionality rather than committing too early. I deferred a costly, largely irreversible decision until I had enough information to make it responsibly. And I focused on learning quickly without committing disproportionate effort up front.

Those are Agile principles.

For years, Agile has focused on creating the conditions for making better decisions in uncertain environments: shortening feedback loops, reducing the cost of learning, and deferring irreversible commitments until the last responsible moment. The challenge has never been understanding those ideas. It has been the friction involved in applying them—especially in complex systems where learning can be expensive and experimentation carries real cost.

AI didn’t change those principles. It changed the environment in which they operate.

By dramatically reducing the cost and time required to move from intent to insight, AI shifts where the real constraints live. Execution is no longer the dominant bottleneck. The constraint moves upstream—to the quality of the questions being asked, the clarity of intent, and the ability to interpret evidence in context.

When execution becomes cheap, weak thinking is exposed quickly. Vague goals, shallow hypotheses, and ritualized practices can no longer hide behind process. At the same time, people who can frame meaningful questions, reason across systems, and make informed trade-offs become dramatically more effective.

This is why framing AI as a replacement for Agile—or as something that renders Agile thinking obsolete—misses the point.

AI doesn’t eliminate the need for judgment, prioritization, or intent. It intensifies it.

Agile was never about ceremonies or roles. It was about discipline in decision-making—how to learn, adapt, and commit responsibly in the face of uncertainty. AI doesn’t compete with that. It amplifies it.

The principles haven’t changed. But the environment in which decisions are made has.

That is the context for this series.

In the articles that follow, I’ll explore how this shift shows up inside organizations—how the economics of learning are changing, how administrative aspects of agility are increasingly automated, and how both Agile thinking and AI now play fundamental roles in helping teams and organizations make better decisions.

If this resonates, I’d welcome hearing from you. Many of the leaders and teams I work with are wrestling with similar questions — how to apply Agile principles in an environment where AI is rapidly changing the cost of execution and the pace of learning.

If you’re navigating that shift inside your own organization and would be open to comparing notes, I’d be glad to have an informal conversation. These exchanges often start simply — grounded in real decisions and real constraints — and lead to clarity about what’s worth changing, what isn’t, and where the real leverage now lives.