Bill Wake’s INVEST acronym describes a set of characteristics that are indicative of a good user story. INVEST stands for Independent, Negotiable, Valuable, Estimatable, Small or Sized Appropriately, and Testable (you can learn more about INVEST in chapter 5 of my Essential Scrum book). In this post I want to focus on Testable and illustrate an artifact called a quality template that can be used to describe testable criteria.

Testable Criteria from the Product Owner Perspective

A product owner specifies the testable criteria that a user story should exhibit in order to deem it done. Some people refer to these testable criteria as “acceptance criteria.” I like Mike Cohn’s term “conditions of satisfaction” because I feel it captures the essence of what is being stated: the conditions under which the product owner would be satisfied that the user story is done.

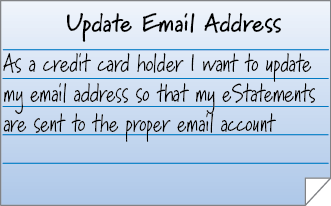

Often these conditions of satisfaction are simple to state in an unambiguous and testable fashion. For example, consider the following user story:

For this story, the conditions of satisfaction are pretty straightforward. At a minimum, verify that a properly formatted email address has been submitted. And, probably also verify that the same email address has been entered identically in both the primary and confirmatory email fields. In this case, a simple textual elaboration of these “tests” is sufficient.
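The two conditions of satisfaction above can also be written as executable checks. The following is only a minimal sketch: the function names and the deliberately simple format rule (one `@`, a non-empty local part, and a dot in the domain) are assumptions made for illustration, and real email validation is more involved.

```python
import re

# Deliberately simple pattern (an assumption for illustration): local@domain.tld
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def is_properly_formatted(email: str) -> bool:
    """Condition 1: the address looks like a properly formatted email."""
    return EMAIL_PATTERN.fullmatch(email) is not None


def emails_match(primary: str, confirmation: str) -> bool:
    """Condition 2: the primary and confirmatory fields are identical."""
    return primary == confirmation


def satisfies_conditions(primary: str, confirmation: str) -> bool:
    """Both conditions of satisfaction must hold for the submission to pass."""
    return is_properly_formatted(primary) and emails_match(primary, confirmation)
```

Calling `satisfies_conditions` with a well-formed address in both fields passes, while a mismatch between the two fields or a malformed address fails.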

There are times, however, when a more detailed description of the testable criteria is warranted.

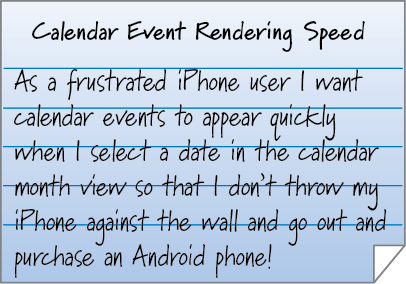

Here is an example story I wanted to submit to Apple years ago when I had an early model iPhone. On my phone, with a medium-size calendar data set, it would take about 20 seconds for the device to show me the events for a particular day when I selected a date from the calendar month view.

So here is the story I wanted to submit:

Now let’s say I submitted that story to Apple. The first question the product owner would want me to answer is what do I mean by quickly? In particular, what test should be run to determine that events now appear quickly?

In this case, a few simple sentences describing the conditions of satisfaction may not be adequate. In these situations I like to use a Quality Template.

Quality Templates

Years ago I borrowed the concept of a quality template from Tom Gilb and his book Principles of Software Engineering Management from 1988. In my 1994 book Succeeding with Objects, I discussed quality templates in detail. Here is a summary.

Quality templates are a way to capture the information necessary for measuring an external product attribute. The table below illustrates the core elements of a quality template.

| Aspect | Definition |
|---|---|
| Name & Description | Unambiguous and meaningful to end user |
| Prerequisites | Given <Pre Conditions>: conditions that must be met before measurements are meaningful |
| Test | Precise test or measure used to determine value on Scale |
| Scale | Scale along which measurements will be made |
| Minimally Acceptable | Worst acceptable limit on Scale |
| Plan | Desired level on Scale |
| Best | Engineering limit, state-of-the-art, best ever |
| Current | Some existing system for comparison or the current performance number of the feature |
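
For teams that keep their stories in a tool, the elements in the table can be captured as a small record. This is a sketch only: the field names mirror the table rows, while the `lower_is_better` flag and the `is_consistent` check are assumptions added for illustration and are not part of the quality template as described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class QualityTemplate:
    """One record per measurable quality attribute; fields mirror the table rows."""
    name: str
    prerequisites: str
    test: str
    scale: str
    minimally_acceptable: float
    plan: float
    best: Optional[float] = None     # may be unknown until engineering weighs in
    current: Optional[float] = None  # may be unknown for a brand-new feature
    lower_is_better: bool = True     # assumption: True for elapsed time, False for e.g. reliability

    def is_consistent(self) -> bool:
        """Check that Minimally Acceptable, Plan, and Best run from worst to best."""
        levels = [self.minimally_acceptable, self.plan, self.best]
        known = [v for v in levels if v is not None]
        if self.lower_is_better:
            known = [-v for v in known]  # flip so that larger always means better
        return all(a <= b for a, b in zip(known, known[1:]))
```

The consistency check catches a template whose Plan is better than its Best, which would signal that one of the numbers was entered against the wrong direction of the scale.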

Example – Quality Template for Quickly Show Calendar Events

Here is an example quality template that I would associate with the get-calendar-events-to-appear-quickly story.

| Aspect | Definition |
|---|---|
| Name & Description | Calendar event rendering speed |
| Prerequisites | Given the iPhone is on and the calendar app is displayed in month view. When I touch a date on the calendar. |
| Test | From the moment the date is touched until the events for that date are painted below the calendar in a manner in which I can interact with them |
| Scale | Milliseconds |
| Minimally Acceptable | 3,000 milliseconds |
| Plan | 3,000 milliseconds |
| Best | 1,500 milliseconds |
| Current | ~20,000 milliseconds |

Based on this template, the test for quickly will be the amount of elapsed time in milliseconds from when a person touches a date in the calendar until the events for that day are presented to the person in a way that she can interact with them.

Notice there are four numbers in this quality template. Two of them I would provide to Apple and two the Apple product owner would have to define. I will provide “Current,” which is about 20,000 milliseconds (about 20 seconds). I will also provide “Plan,” where I define what quickly means on the scale associated with the quality template; in this example, 3,000 milliseconds (3 seconds). So, if Apple can improve the performance from 20 seconds to 3 seconds, then I as a customer would deem this story done.

Apple’s product owner would need to determine the remaining two numbers. “Best” is the theoretical limit of the early iPhone device. Perhaps the Apple engineers tell the product owner that on my device (with a specific microprocessor and memory and a medium-sized data set), the theoretical limit on rendering speed is 1,500 milliseconds (1.5 seconds). As another example, if this quality template were about reliability, then best would be 100% (can’t possibly do any better than that).

The final number is “Minimally Acceptable.” This number is the minimum performance number on the scale that would be acceptable to the product owner. In this example, the product owner is stating that the development team must achieve the planned number (since both are 3,000 milliseconds) or the story is not done.
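To make the relationship between a measurement and these numbers concrete, here is a rough sketch. The helper names `elapsed_ms` and `assess` are hypothetical, and timing a callable with `time.perf_counter` merely stands in for whatever instrumentation would actually be used on a device.

```python
import time
from typing import Callable


def elapsed_ms(action: Callable[[], None]) -> float:
    """Run the action once and return the elapsed wall-clock time in milliseconds."""
    start = time.perf_counter()
    action()
    return (time.perf_counter() - start) * 1000.0


def assess(measured_ms: float, minimally_acceptable_ms: float, plan_ms: float) -> str:
    """Classify an elapsed-time measurement; lower is better on this scale."""
    if measured_ms <= plan_ms:
        return "meets plan"
    if measured_ms <= minimally_acceptable_ms:
        return "minimally acceptable"
    return "not done"
```

With Minimally Acceptable and Plan both set to 3,000 milliseconds, as in the calendar example, `assess` can never return "minimally acceptable": a measurement either meets the plan or the story is not done.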

Incrementally Achieving a Performance Goal

What if, during initial conversations with the development team, the product owner asks the team to improve performance from 20 seconds to 3 seconds in the next sprint? And what if the team responds with, “Ummm, that’s not possible!” The question is how to proceed.

Should the product owner allow the user story (with a 3,000 millisecond planned target) to enter the next sprint knowing that the team will not achieve the planned performance level during the sprint? If the team does not achieve the goal, should the product owner just roll the story forward into the next sprint and perhaps the sprint after that until it is completed (the goal of 3,000 milliseconds or better is achieved)? This would be a poor way of performing Scrum!

Alternatively, in this example, the product owner might choose to meet the performance improvement incrementally. Initially the product owner might ask the team what level of performance improvement it can achieve in the first sprint. Let’s say the team believes they can get to 12 seconds from 20 seconds. If the product owner thinks this is reasonable, then the product owner would set minimally acceptable to 12,000 milliseconds on the initial performance-improvement user story. If at the end of the first sprint the team achieves a performance level of 12,000 milliseconds or faster, then the story is done.

In the next sprint, the product owner could choose to put in a new user story that is identical to the first one, except on this second story the quality template would have two different numbers. The first would be “Current,” which is now 12,000 milliseconds (or better). The second would be a new target for Minimally Acceptable, which perhaps for this story would be 6,000 milliseconds. And, of course, if the team achieves a performance of 6,000 milliseconds or better on this second story, the second story would be considered done.

Then in the next sprint there could be a third user story where Minimally Acceptable and Plan are now both 3,000 milliseconds. If the planned number is achieved, then the overall performance objective has been met and the product owner might consider releasing this feature to her customers.

In this way, a quality template could be used across a collection of user stories as a way of incrementally meeting a difficult-to-achieve-in-one-sprint performance objective.
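The three-story progression can be sketched as data plus a small review function. The targets come from the example above; the `review` function, its report wording, and the third story's Current value (taken to be the second story's target) are assumptions for illustration.

```python
from typing import List, Tuple

# (current_ms, minimally_acceptable_ms) for each story in the example.
STORIES: List[Tuple[int, int]] = [
    (20_000, 12_000),  # first sprint
    (12_000, 6_000),   # second sprint
    (6_000, 3_000),    # third sprint: Minimally Acceptable equals Plan
]
FINAL_PLAN_MS = 3_000


def review(measured_by_sprint: List[int]) -> List[str]:
    """Report, sprint by sprint, whether each story is done (lower is better)."""
    report = []
    for (current, target), measured in zip(STORIES, measured_by_sprint):
        done = measured <= target
        overall = measured <= FINAL_PLAN_MS
        report.append(
            f"{current} -> {measured} ms (target {target}): "
            f"{'done' if done else 'not done'}"
            f"{', overall objective met' if overall else ''}"
        )
    return report
```

Passing the measurement taken at the end of each sprint, for example `review([11_500, 6_000, 2_900])`, yields one line per story, so the product owner can see each story's status separately from the status of the overall objective.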

An additional advantage of incrementally achieving the performance objective across three stories is increased optionality. Here are two examples. First, what if the team, after achieving 6,000 milliseconds in the second sprint, informs the product owner that the incremental cost of going from 6,000 to 3,000 milliseconds is 40x the cost of the performance work done so far? The product owner could decide to declare 6,000 milliseconds the economically sensible performance level for calendar event rendering and not pursue the expensive effort needed to achieve 3,000 milliseconds.

Or, what if a very important business need becomes a priority after the second sprint? The product owner could choose to release the feature at a performance level of 6,000 milliseconds, then work on the new high-priority backlog item, and then return at a future date to the story to improve the performance level to 3,000 milliseconds.

Summary

User stories need to be testable in a way that is defined by the product owner. Often the product owner can easily describe in a sentence or two what the conditions of satisfaction are. Other times the description of the test needs to be more precise so there is no ambiguity around whether or not the goal has been achieved. In these cases, I often use a quality template to communicate the testable criteria. Quality templates also nicely support the ability to allow a team to incrementally achieve a time-consuming objective over a collection of sprints.